At a high level, Docker Networking comprises 3 major components:

- The Container Network Model (CNM)

- libnetwork

- Drivers

CNM is a network specification that defines how containers connect and communicate across different environments.

libnetwork is Docker’s implementation of the CNM. (Think of the CNM as the architectural drawing and libnetwork as the builder’s implementation of that drawing.)

Drivers extend the CNM by implementing specific network topologies, such as VXLAN overlay networks.

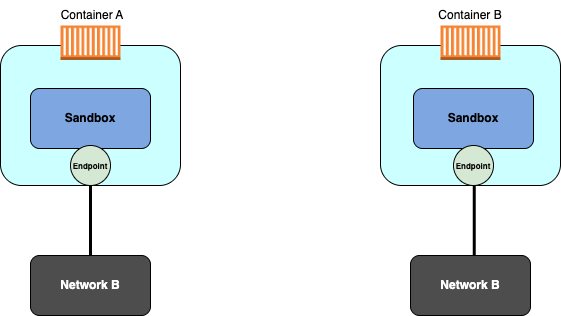

The CNM defines the following core constructs:

- sandboxes

- endpoints

- networks

The sandbox is an isolated network stack (namespace) for a container. It includes the network interfaces, routing tables and DNS config.

Endpoints are virtual network interfaces. In the CNM, their role is to connect the sandbox to a network.

Networks are a software implementation of a switch. Endpoints terminate on these networks. As a result, connectivity between containers is determined by which networks their endpoints connect to.

The image below gives a representation of how these constructs work together:

These endpoints that facilitate connectivity to a network behave like regular network adapters, meaning they can only be connected to a single network. If a container needs connecting to multiple networks, it will need multiple endpoints.
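The relationship between the three constructs can be sketched as a small Python model. This is only an illustration of the CNM ideas described above, not libnetwork's actual data structures; the class names, the `connect` method and the `can_communicate` helper are all assumptions made for this example.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Network:
    """A CNM network: behaves like a virtual switch that endpoints plug into."""
    name: str


@dataclass
class Endpoint:
    """A virtual interface; it attaches to exactly one network."""
    network: Network


@dataclass
class Sandbox:
    """A container's isolated network stack, holding its endpoints."""
    container: str
    endpoints: List[Endpoint] = field(default_factory=list)

    def connect(self, network: Network) -> Endpoint:
        # Joining another network always means adding another endpoint.
        endpoint = Endpoint(network)
        self.endpoints.append(endpoint)
        return endpoint

    def networks(self) -> Set[str]:
        return {ep.network.name for ep in self.endpoints}


def can_communicate(a: Sandbox, b: Sandbox) -> bool:
    """In this simplified model, two sandboxes can talk only if they share a network."""
    return bool(a.networks() & b.networks())


net_a, net_b = Network("net_a"), Network("net_b")
c1, c2, c3 = Sandbox("c1"), Sandbox("c2"), Sandbox("c3")
c1.connect(net_a)
c2.connect(net_a)
c2.connect(net_b)  # c2 needs a second endpoint to join a second network
c3.connect(net_b)
```

Here c2 ends up with two endpoints, one per network, while c1 and c3 have no network in common and so cannot reach each other in this model.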

As mentioned earlier, libnetwork is Docker’s implementation of the CNM design specification.

In the early days of Docker, all networking code existed in the Docker daemon. The daemon became bloated and difficult to use/manage. As a result, everything got torn up and refactored into an external library called libnetwork based on the principles of CNM. Now, all the core networking code for Docker lives in libnetwork.

While libnetwork implements the control plane and management plane functions for Docker networking, drivers implement the data plane. Connectivity and isolation are handled by drivers, as is the actual creation of the networks.

Docker ships with several built-in drivers, known as native drivers or local drivers. On Linux, they include bridge, overlay and macvlan. On Windows, you have nat, overlay, transparent and l2bridge.

libnetwork allows multiple network drivers to be active at the same time.

Single-host bridge networks

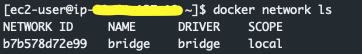

This is the simplest type of Docker network. Docker on Linux creates single-host bridge networks using the built-in bridge driver. The default network of this type is also called ‘bridge’.

By default, all containers on a Linux host are connected to this network unless you override it on the command line with the --network flag. As shown in the screenshot below, the docker network ls command shows that both the network name and the driver are called bridge.

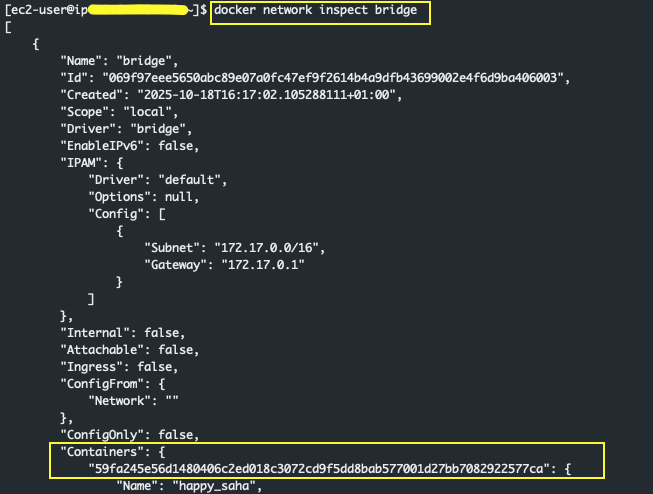

The docker network inspect <network name> command also provides valuable network information. For example, the command below shows a snippet of the output for the bridge network. Notice it includes information about the containers using that network:

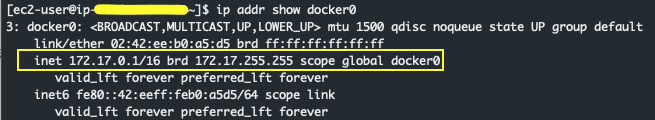

The default ‘bridge’ network created by Docker maps to an underlying Linux bridge in the kernel called ‘docker0’. This can also be seen in the output of docker network inspect.

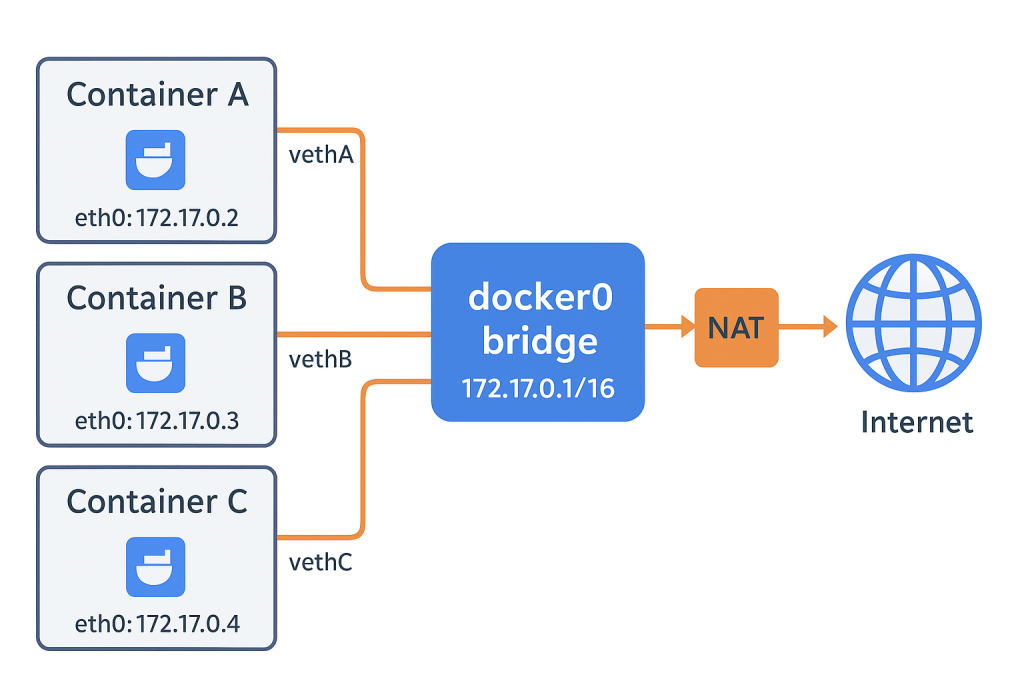

When Docker is installed, Docker automatically creates a Linux bridge interface called docker0.

This virtual bridge (you can think of it as a virtual switch) is used by Docker’s default “bridge” network to connect containers to each other and to the host.

If you create a different user-defined bridge network, Docker will create another Linux bridge interface. That bridge serves as a virtual switch for containers attached to that custom network, allowing them to communicate with each other and with the host — isolated from containers on other networks.

The diagram below shows how the containers integrate with docker0:

As shown in the image above, docker0 has an IP address of 172.17.0.1/16. This can be verified using the command ‘ip addr show docker0’.

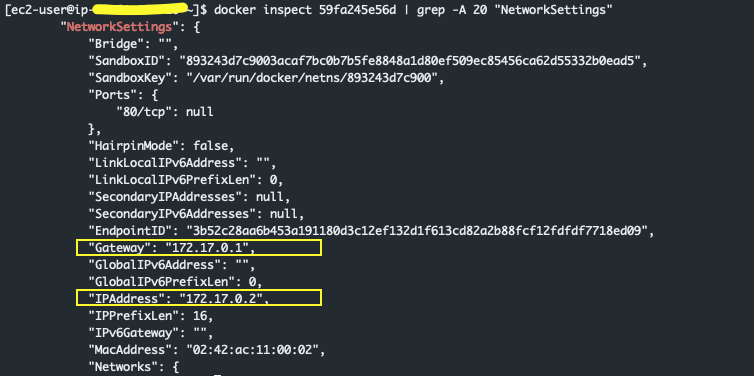

Any container in the default bridge network uses docker0’s IP address as its default gateway. An example is shown in the snippet below, where the IP address of my container is 172.17.0.2 and its gateway is 172.17.0.1.
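To make the gateway relationship concrete, here is a minimal sketch using Python's standard ipaddress module with the addresses quoted above. The `on_link` helper is a name invented for this example; it only checks subnet membership and does not query Docker.

```python
import ipaddress

# Values taken from the text above.
bridge = ipaddress.ip_interface("172.17.0.1/16")   # docker0
container_ip = ipaddress.ip_address("172.17.0.2")  # example container


def on_link(address, interface):
    """Return True if the address sits inside the interface's subnet."""
    return address in interface.network


# The container shares docker0's subnet, so it can reach the gateway directly.
gateway = bridge.ip
same_subnet = on_link(container_ip, bridge)
```

Traffic to any address outside 172.17.0.0/16 is sent to the gateway, which is docker0 on the host.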

To create your own bridge network (separate from the default bridge network in Docker), the syntax below is used:

docker network create \
--driver bridge \
--subnet 192.168.100.0/24 \
--gateway 192.168.100.1 \
my_custom_bridge
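If you script network creation, it can help to sanity-check the addressing before calling Docker. The sketch below builds the same argument list as the command above and rejects a gateway that falls outside the subnet. The function name `bridge_create_command` is an assumption made for this example, and the code only constructs the command rather than running it.

```python
import ipaddress
from typing import List


def bridge_create_command(name: str, subnet: str, gateway: str) -> List[str]:
    """Build the argument list for 'docker network create' after a sanity check."""
    network = ipaddress.ip_network(subnet)
    if ipaddress.ip_address(gateway) not in network:
        raise ValueError(f"gateway {gateway} is outside subnet {subnet}")
    return [
        "docker", "network", "create",
        "--driver", "bridge",
        "--subnet", subnet,
        "--gateway", gateway,
        name,
    ]


cmd = bridge_create_command("my_custom_bridge", "192.168.100.0/24", "192.168.100.1")
# To actually create the network (requires a running Docker daemon):
#   import subprocess; subprocess.run(cmd, check=True)
```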

To provision a new container called my-nginx and attach it to the newly created network, I would use the command below. The command also binds port 80 in the container to port 8080 on the Docker host.

docker create --name my-nginx \
--network my_custom_bridge \
--publish 8080:80 \
nginx:latest

Host Networks

In the host network mode for a container, the container’s network stack isn’t isolated from the Docker host. In this mode, the container shares the host’s networking namespace, and the container doesn’t get its own IP address allocated. For instance, if you run a container which binds to port 80 and you use host networking, the container’s application is available on port 80 on the host’s IP address.

This network type is useful when you want to optimise performance, because traffic to the container does not pass through network address translation (NAT).

To attach my container to the host network, the syntax is below:

docker run -d \
--network host \
--name web_srv01 \
nginx:latest

If the application in the container above is listening on port 80, I can reach it simply by connecting to the IP address of my Docker host on port 80.

Macvlan networks

You might have a requirement for your container to communicate with hosts on the physical network that are in the same VLAN. In this situation, the macvlan driver, which is used to create macvlan networks in Docker, can assign a unique MAC address to the virtual network interface of each container that needs to communicate with hosts on the physical network.

NOTE: The macvlan driver is used to create networks of the same name (i.e. macvlan networks), similar to how the bridge driver is used to create bridge networks.

When creating a macvlan network, you can pass in additional information to ensure the containers join the correct network, pick up the correct IP address range, know their default gateway (which will typically be an address on the physical network) and are placed in the correct VLAN.

The command below creates a macvlan network and defines some additional information, including the subnet the containers will be in and, using the --ip-range flag, the range from which the containers can be assigned IP addresses. This helps ensure the containers don’t unintentionally pick up an IP address in the same VLAN that is already in use by a host on the physical network.

docker network create -d macvlan \
--subnet=10.1.1.0/24 \
--ip-range=10.1.1.0/25 \
--gateway=10.1.1.1 \
-o parent=eth0.100 \
macvlan_net100

The -o parent=eth0.100 flag is used to create a sub-interface. MACVLAN uses standard Linux sub-interfaces, so they have to be correctly tagged with the VLAN ID of the physical network. In this case, we’re connecting to VLAN 100 on the physical network, so the sub-interface is tagged with .100.
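The addressing in that command can be checked with a short Python sketch based on the standard ipaddress module. It uses the values from the command above; the `vlan_id` helper is a hypothetical name for this example and assumes the parent interface follows the `name.tag` convention.

```python
import ipaddress

# Values taken from the docker network create command above.
subnet = ipaddress.ip_network("10.1.1.0/24")
ip_range = ipaddress.ip_network("10.1.1.0/25")
gateway = ipaddress.ip_address("10.1.1.1")
parent = "eth0.100"


def vlan_id(parent_interface: str) -> int:
    """Extract the VLAN tag from a sub-interface name such as 'eth0.100'."""
    _, _, tag = parent_interface.rpartition(".")
    return int(tag)  # raises ValueError if there is no numeric tag


# The container range must sit inside the subnet, as must the gateway.
range_ok = ip_range.subnet_of(subnet)
gateway_ok = gateway in subnet

# Container addresses are drawn from the lower half of the /24.
first_container_ip, last_container_ip = ip_range[0], ip_range[-1]
```

With these values, containers are limited to the lower half of the /24, which leaves 10.1.1.128 to 10.1.1.255 free for hosts on the physical network.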

Service Discovery

Apart from core networking, libnetwork also provides important network services such as DNS.

Service discovery in Docker allows containers to locate each other by name as long as they are on the same user-defined network (automatic name resolution is not available on the default bridge network). This is achieved by leveraging Docker’s embedded DNS server and the DNS resolver in each container. Here is how it works:

- Let’s say a container, “c1”, is trying to ping another container, “c2”, which shares the same network

- “c1” queries its local DNS resolver for the name, “c2” in order to resolve its IP address

- If the local resolver doesn’t have an IP address for “c2” in its local cache, it initiates a recursive query to the Docker DNS server. Each local resolver is pre-configured to know how to reach the Docker DNS server

- The Docker DNS server holds name-to-IP mappings for all containers created with the --name or --net-alias flags. This means the Docker DNS server knows the IP address of “c2”

- The Docker DNS server returns the IP address of “c2” to the local resolver in “c1”, which returns the response of the query back to “c1”

- “c1” now has the IP address of “c2”, and is now able to successfully ping its IP address
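The lookup flow in the steps above can be sketched in Python. This is a toy model of the cache-then-server behaviour only, not Docker's implementation; the class names `EmbeddedDNS` and `LocalResolver` and the address 172.18.0.3 are assumptions made for this example.

```python
from typing import Dict, Optional


class EmbeddedDNS:
    """Stand-in for the Docker DNS server: name-to-IP records for one network."""

    def __init__(self) -> None:
        self.records: Dict[str, str] = {}

    def register(self, name: str, ip: str) -> None:
        self.records[name] = ip

    def lookup(self, name: str) -> Optional[str]:
        return self.records.get(name)


class LocalResolver:
    """Stand-in for a container's resolver: check the cache, then ask the server."""

    def __init__(self, server: EmbeddedDNS) -> None:
        self.server = server
        self.cache: Dict[str, str] = {}

    def resolve(self, name: str) -> Optional[str]:
        if name in self.cache:
            return self.cache[name]
        ip = self.server.lookup(name)  # cache miss: query the DNS server
        if ip is not None:
            self.cache[name] = ip
        return ip


dns = EmbeddedDNS()
dns.register("c2", "172.18.0.3")  # hypothetical address for container c2

c1_resolver = LocalResolver(dns)
c2_ip = c1_resolver.resolve("c2")       # first call goes to the server
c2_ip_again = c1_resolver.resolve("c2")  # second call is served from the cache
```

A name that was never registered, such as a container on a different network, simply resolves to None in this sketch.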

For more detailed information about Docker networking, see the official Docker documentation.